Google’s image recognition software is an incredible feat of engineering. Loosely modelled on the structure of a biological brain, its ‘artificial neural network’ (ANN) consists of 20–30 stacked layers of artificial ‘neurons’, with each layer passing what it finds to the next so that the network can work out what it ‘sees’ in a given image.

Like a human brain, the ANN is ‘trained’ to recognise objects and elements of a picture, with the neurons constantly adjusting and learning until, together, they can accurately recognise, say, a dog, a banana or a tree.

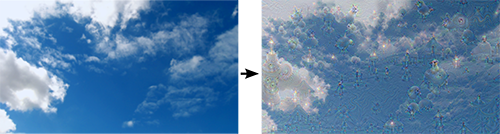

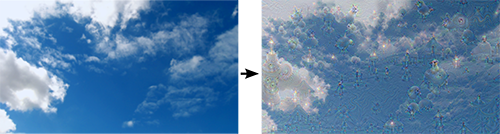

But the ANN is not yet perfect. In an effort to understand precisely what is happening at each layer of the network, programmers decided to try turning the network upside down: instead of simply recognising elements of an image, would the ANN be able to recreate the image?
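For the curious, here is a toy sketch of that ‘upside down’ idea in Python. It is not Google’s code, just a minimal illustration under some loud assumptions: the network is a made-up one with four ‘pixels’, three neurons and hand-picked weights (`W1` and `W2` are hypothetical), and the function names `forward`, `score_gradient` and `dream` are invented for this example. Instead of adjusting the network to fit a picture, it keeps the network fixed and nudges the picture, step by step, in whichever direction makes the network’s output score go up.

```python
import math

# Hypothetical, hand-picked weights for a toy network:
# 4 input "pixels" -> 3 hidden neurons -> 1 output score.
W1 = [
    [0.5, -0.3, 0.2, 0.1],
    [-0.4, 0.5, 0.1, -0.2],
    [0.3, 0.2, -0.5, 0.4],
]
W2 = [1.0, -0.5, 0.8]


def forward(pixels):
    """Run the network the usual way: pixels in, score out."""
    hidden = [math.tanh(sum(w * p for w, p in zip(row, pixels))) for row in W1]
    score = sum(w * h for w, h in zip(W2, hidden))
    return hidden, score


def score_gradient(pixels):
    """How much the score changes when each pixel is nudged slightly."""
    hidden, _ = forward(pixels)
    return [
        sum(W2[i] * (1.0 - hidden[i] ** 2) * W1[i][j] for i in range(len(W1)))
        for j in range(len(pixels))
    ]


def dream(pixels, steps=50, step_size=0.1):
    """Keep the weights fixed and repeatedly adjust the pixels to raise the score."""
    pixels = list(pixels)
    scores = [forward(pixels)[1]]
    for _ in range(steps):
        grad = score_gradient(pixels)
        pixels = [p + step_size * g for p, g in zip(pixels, grad)]
        scores.append(forward(pixels)[1])
    return pixels, scores


if __name__ == "__main__":
    final_pixels, scores = dream([0.0, 0.0, 0.0, 0.0])
    print("score before:", scores[0])
    print("score after: ", scores[-1])
    print("dreamed pixels:", final_pixels)
```

Starting from a blank ‘image’ of all zeros, the score climbs with each step as the pixels drift towards whatever pattern this toy network responds to most strongly. A real image network has millions of weights rather than fifteen, but the same trick of changing the picture rather than the network is one common way to peek at what a layer has learned.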

The results were surprising – often beautiful, and sometimes pretty creepy!

You see, while our human brain can look at clouds in the sky and sometimes imagine that they look like a bird, or a heart, or a face, we know that clouds are clouds. But the ANN doesn’t know that, yet.

When presented with a picture of clouds and asked to recreate it, this happened:
